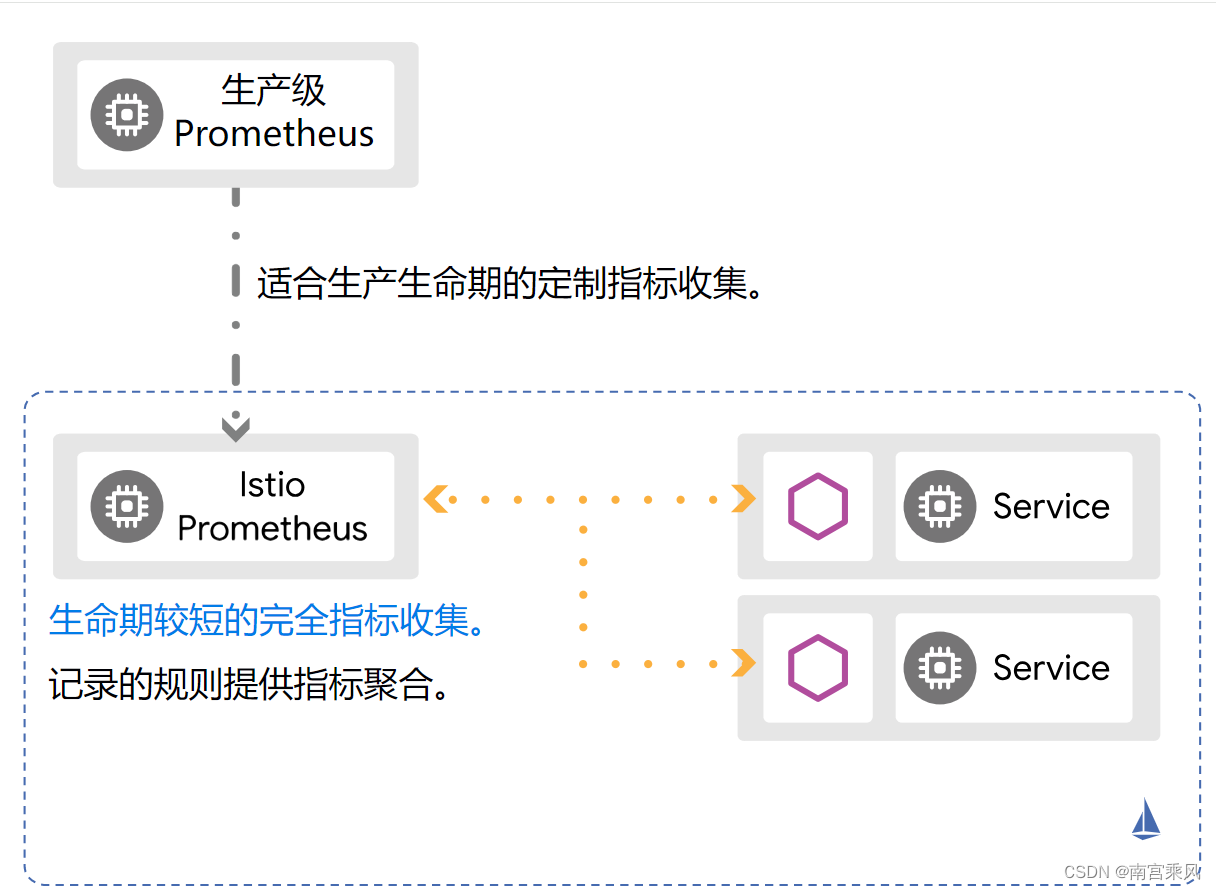

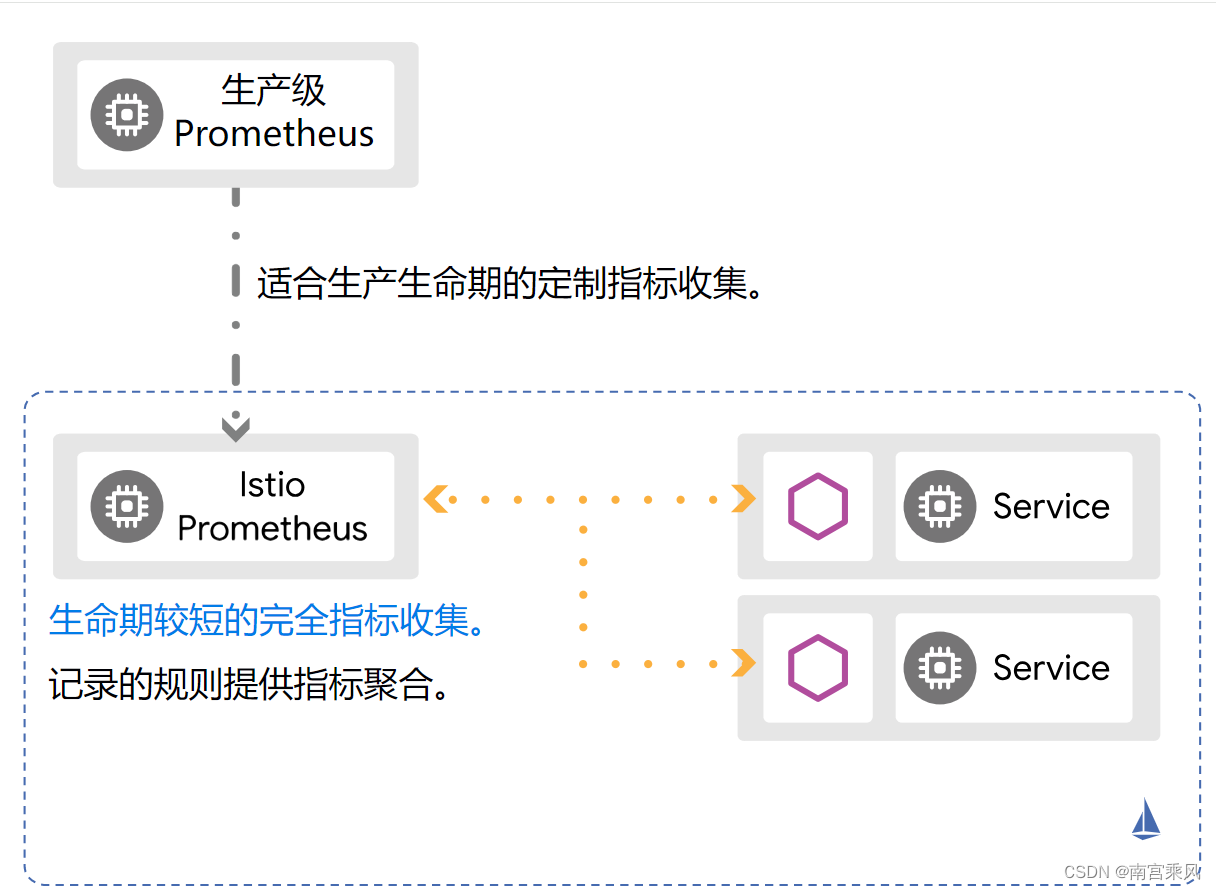

Prometheus is the recommended choice for monitoring Istio across multiple clusters, mainly because of Prometheus's hierarchical federation.

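The Prometheus instance that Istio deploys into each cluster acts as the initial collector, and its data is then aggregated into a mesh-level Prometheus instance. The mesh-level Prometheus can run either outside the mesh (external) or in one of the clusters inside the mesh.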

For production-scale monitoring with Istio and Prometheus, the recommended approach is hierarchical federation combined with a set of recording rules.
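A minimal sketch of what such workload-level recording rules might look like, assuming the per-proxy labels to aggregate away are named instance, pod, and namespace. The group name and the interval are illustrative assumptions, not values taken from this post:

```yaml
# Hypothetical rules file for the Istio-deployed Prometheus.
# Group name and interval are illustrative assumptions.
groups:
- name: istio.workload.aggregation
  interval: 5s
  rules:
  # Aggregate away per-proxy labels so that one series remains per workload.
  - record: workload:istio_requests_total
    expr: sum without(instance, pod, namespace) (istio_requests_total)
  - record: workload:istio_request_duration_milliseconds_bucket
    expr: sum without(instance, pod, namespace) (istio_request_duration_milliseconds_bucket)
```

The `workload:` prefix on the recorded series is what the federation job later matches on and strips.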

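Although installing Istio does not deploy Prometheus by default, the "Option 1: Quick Start" deployment in the getting-started guide installs Prometheus as described in the Prometheus integration guide. This Prometheus deployment is deliberately configured with a very short retention window (6 hours). The quick-start Prometheus is also configured to collect metrics from every Envoy proxy running in the mesh, and it augments those metrics with a set of labels describing their origin (instance, pod, and namespace).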

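Federation using workload-level aggregated metrics

To set up Prometheus federation, modify the configuration of your production Prometheus deployment so that it scrapes the federation endpoint of the Istio Prometheus.

Add the following job to the configuration: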

```yaml
- job_name: 'istio-prometheus'
  honor_labels: true
  metrics_path: '/federate'
  kubernetes_sd_configs:
  - role: pod
    namespaces:
      names: ['istio-system']
  metric_relabel_configs:
  - source_labels: [__name__]
    regex: 'workload:(.*)'
    target_label: __name__
    action: replace
  params:
    'match[]':
    - '{__name__=~"workload:(.*)"}'
    - '{__name__=~"pilot(.*)"}'
```

If you are using the Prometheus Operator, use the following configuration instead.

This is the approach I use:

```yaml
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: istio-federation
  labels:
    app.kubernetes.io/name: istio-prometheus
spec:
  namespaceSelector:
    matchNames:
    - istio-system
  selector:
    matchLabels:
      app: prometheus
  endpoints:
  - interval: 30s
    scrapeTimeout: 30s
    params:
      'match[]':
      - '{__name__=~"workload:(.*)"}'
      - '{__name__=~"pilot(.*)"}'
    path: /federate
    targetPort: 9090
    honorLabels: true
    metricRelabelings:
    - sourceLabels: ["__name__"]
      regex: 'workload:(.*)'
      targetLabel: "__name__"
      action: replace
```

```
kubectl apply -f istio-federation.yaml -n monitoring

[root@bt ~]# kubectl get servicemonitors.monitoring.coreos.com -n monitoring istio-federation
NAME               AGE
istio-federation   44d
```

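The key to the federation configuration is to first match the job in the Istio-deployed Prometheus that collects the standard Istio metrics, and then to rename the collected metrics by stripping the prefix used in the workload-level recording rule names (workload:). This lets existing dashboards and queries keep working seamlessly against the production Prometheus (and no longer point at the Istio instance).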
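To make the rename step concrete, here is the same relabel rule annotated with its effect. The `replacement` line is only the Prometheus default written out for clarity, and the metric names in the trailing comments are illustrative examples:

```yaml
metric_relabel_configs:
- source_labels: [__name__]
  regex: 'workload:(.*)'   # matched against the whole metric name (anchored)
  target_label: __name__
  replacement: '$1'        # the default value, shown explicitly
  action: replace
# Effect on federated series (illustrative names):
#   workload:istio_requests_total  ->  istio_requests_total
#   pilot_xds_pushes               ->  pilot_xds_pushes   (no match, unchanged)
```

Because the regex is anchored, only names that begin with `workload:` are rewritten; everything else passes through untouched.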

You can include additional metrics (for example envoy, go, and so on) when setting up federation.
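As a sketch of how that might look, extra selectors can be appended to the `match[]` parameter of the federation job. The `envoy_` and `go_` name prefixes below are assumptions about how those metrics are named in your Istio Prometheus, so check the actual series names before relying on them:

```yaml
params:
  'match[]':
  - '{__name__=~"workload:(.*)"}'
  - '{__name__=~"pilot(.*)"}'
  # Assumed prefixes for the additional metric families:
  - '{__name__=~"envoy_(.*)"}'
  - '{__name__=~"go_(.*)"}'
```

Each extra selector increases the volume of data pulled through `/federate`, so it is worth adding only the families your dashboards actually use.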

Control plane metrics are also collected and federated by the production Prometheus.

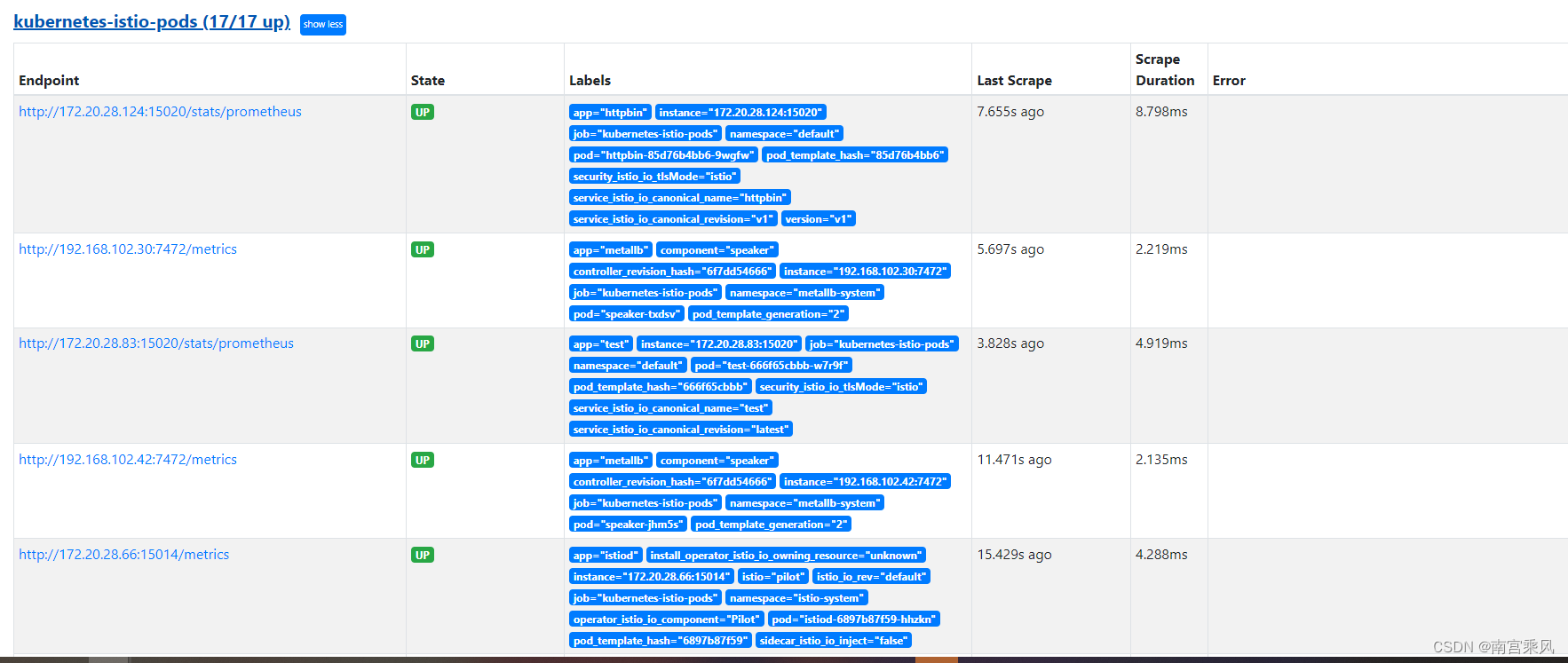

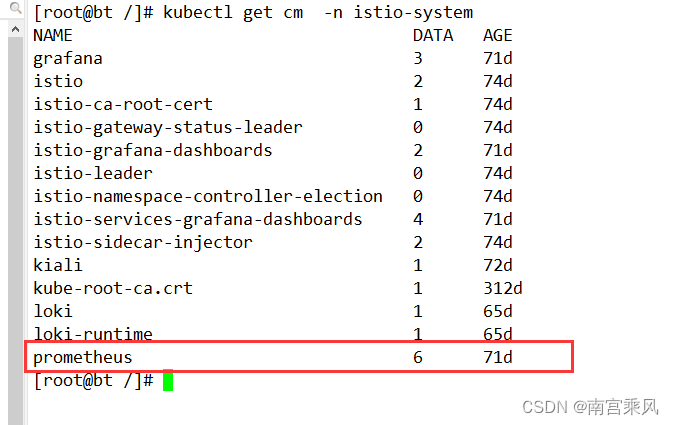

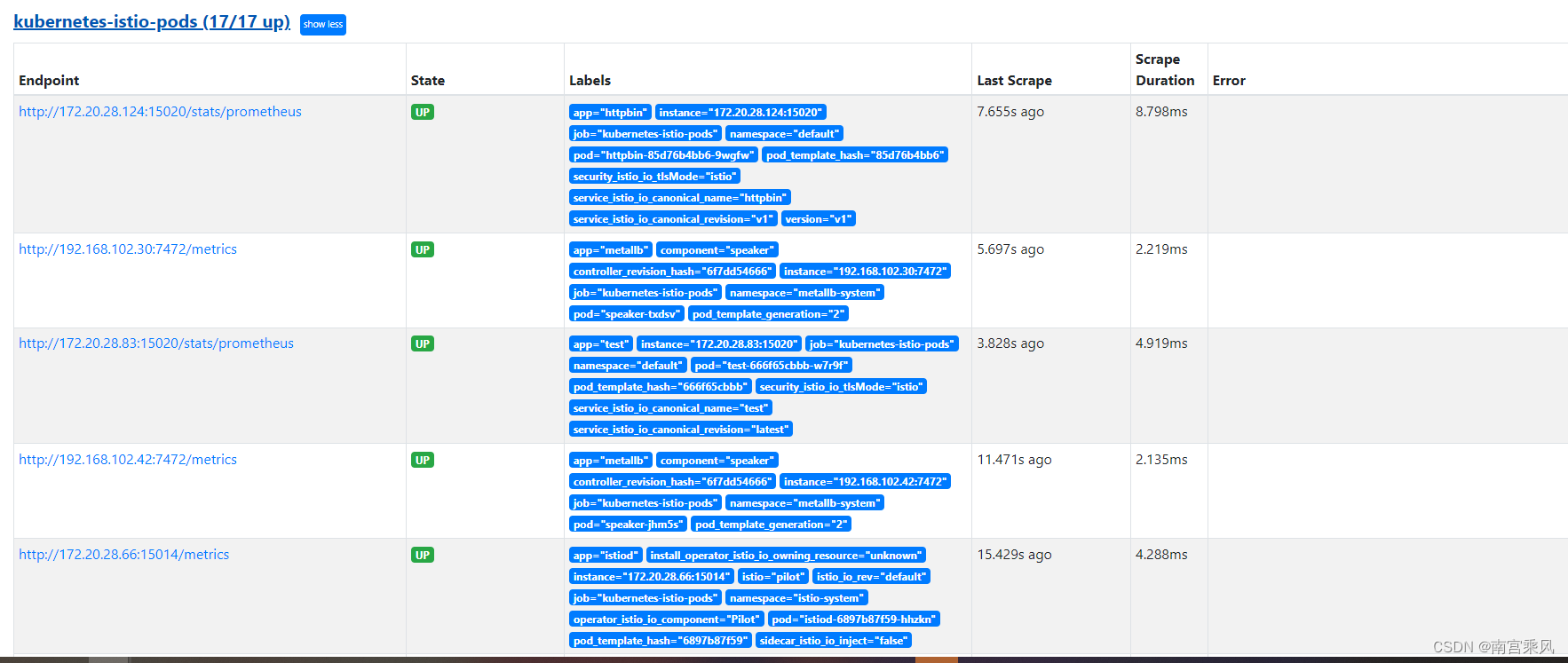

Monitoring kubernetes-istio-pods with a scrape configuration

How do you write a Prometheus scrape job so that Prometheus scrapes and processes the metrics of the Istio pods running on Kubernetes?

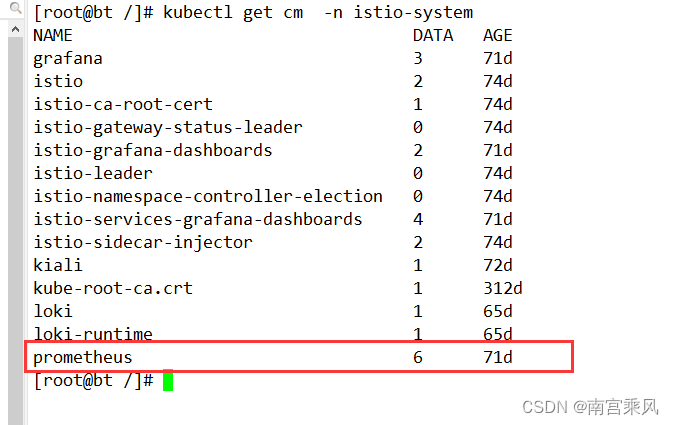

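1. Istio comes with its own integrated Prometheus installation.

2. Look at how the configuration of that Prometheus is written.

3. Adapt that configuration and integrate it into our own Prometheus.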

```yaml
global:
  evaluation_interval: 1m
  scrape_interval: 15s
  scrape_timeout: 10s
rule_files:
- /etc/config/recording_rules.yml
- /etc/config/alerting_rules.yml
- /etc/config/rules
- /etc/config/alerts
scrape_configs:
- job_name: prometheus
  static_configs:
  - targets:
    - localhost:9090
- bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  job_name: kubernetes-apiservers
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - action: keep
    regex: default;kubernetes;https
    source_labels:
    - __meta_kubernetes_namespace
    - __meta_kubernetes_service_name
    - __meta_kubernetes_endpoint_port_name
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
    insecure_skip_verify: true
- bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  job_name: kubernetes-nodes
  kubernetes_sd_configs:
  - role: node
  relabel_configs:
  - action: labelmap
    regex: __meta_kubernetes_node_label_(.+)
  - replacement: kubernetes.default.svc:443
    target_label: __address__
  - regex: (.+)
    replacement: /api/v1/nodes/$1/proxy/metrics
    source_labels:
    - __meta_kubernetes_node_name
    target_label: __metrics_path__
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
    insecure_skip_verify: true
- bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  job_name: kubernetes-nodes-cadvisor
  kubernetes_sd_configs:
  - role: node
  relabel_configs:
  - action: labelmap
    regex: __meta_kubernetes_node_label_(.+)
  - replacement: kubernetes.default.svc:443
    target_label: __address__
  - regex: (.+)
    replacement: /api/v1/nodes/$1/proxy/metrics/cadvisor
    source_labels:
    - __meta_kubernetes_node_name
    target_label: __metrics_path__
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
    insecure_skip_verify: true
- honor_labels: true
  job_name: kubernetes-service-endpoints
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - action: keep
    regex: true
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_scrape
  - action: drop
    regex: true
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_scrape_slow
  - action: replace
    regex: (https?)
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_scheme
    target_label: __scheme__
  - action: replace
    regex: (.+)
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_path
    target_label: __metrics_path__
  - action: replace
    regex: (.+?)(?::\d+)?;(\d+)
    replacement: $1:$2
    source_labels:
    - __address__
    - __meta_kubernetes_service_annotation_prometheus_io_port
    target_label: __address__
  - action: labelmap
    regex: __meta_kubernetes_service_annotation_prometheus_io_param_(.+)
    replacement: __param_$1
  - action: labelmap
    regex: __meta_kubernetes_service_label_(.+)
  - action: replace
    source_labels:
    - __meta_kubernetes_namespace
    target_label: namespace
  - action: replace
    source_labels:
    - __meta_kubernetes_service_name
    target_label: service
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_node_name
    target_label: node
- honor_labels: true
  job_name: kubernetes-service-endpoints-slow
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - action: keep
    regex: true
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_scrape_slow
  - action: replace
    regex: (https?)
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_scheme
    target_label: __scheme__
  - action: replace
    regex: (.+)
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_path
    target_label: __metrics_path__
  - action: replace
    regex: (.+?)(?::\d+)?;(\d+)
    replacement: $1:$2
    source_labels:
    - __address__
    - __meta_kubernetes_service_annotation_prometheus_io_port
    target_label: __address__
  - action: labelmap
    regex: __meta_kubernetes_service_annotation_prometheus_io_param_(.+)
    replacement: __param_$1
  - action: labelmap
    regex: __meta_kubernetes_service_label_(.+)
  - action: replace
    source_labels:
    - __meta_kubernetes_namespace
    target_label: namespace
  - action: replace
    source_labels:
    - __meta_kubernetes_service_name
    target_label: service
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_node_name
    target_label: node
  scrape_interval: 5m
  scrape_timeout: 30s
- honor_labels: true
  job_name: prometheus-pushgateway
  kubernetes_sd_configs:
  - role: service
  relabel_configs:
  - action: keep
    regex: pushgateway
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_probe
- honor_labels: true
  job_name: kubernetes-services
  kubernetes_sd_configs:
  - role: service
  metrics_path: /probe
  params:
    module:
    - http_2xx
  relabel_configs:
  - action: keep
    regex: true
    source_labels:
    - __meta_kubernetes_service_annotation_prometheus_io_probe
  - source_labels:
    - __address__
    target_label: __param_target
  - replacement: blackbox
    target_label: __address__
  - source_labels:
    - __param_target
    target_label: instance
  - action: labelmap
    regex: __meta_kubernetes_service_label_(.+)
  - source_labels:
    - __meta_kubernetes_namespace
    target_label: namespace
  - source_labels:
    - __meta_kubernetes_service_name
    target_label: service
- honor_labels: true
  job_name: kubernetes-pods
  kubernetes_sd_configs:
  - role: pod
  relabel_configs:
  - action: keep
    regex: true
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_scrape
  - action: drop
    regex: true
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_scrape_slow
  - action: replace
    regex: (https?)
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_scheme
    target_label: __scheme__
  - action: replace
    regex: (.+)
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_path
    target_label: __metrics_path__
  - action: replace
    regex: (\d+);(([A-Fa-f0-9]{1,4}::?){1,7}[A-Fa-f0-9]{1,4})
    replacement: '[$2]:$1'
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_port
    - __meta_kubernetes_pod_ip
    target_label: __address__
  - action: replace
    regex: (\d+);((([0-9]+?)(\.|$)){4})
    replacement: $2:$1
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_port
    - __meta_kubernetes_pod_ip
    target_label: __address__
  - action: labelmap
    regex: __meta_kubernetes_pod_annotation_prometheus_io_param_(.+)
    replacement: __param_$1
  - action: labelmap
    regex: __meta_kubernetes_pod_label_(.+)
  - action: replace
    source_labels:
    - __meta_kubernetes_namespace
    target_label: namespace
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_name
    target_label: pod
  - action: drop
    regex: Pending|Succeeded|Failed|Completed
    source_labels:
    - __meta_kubernetes_pod_phase
- honor_labels: true
  job_name: kubernetes-pods-slow
  kubernetes_sd_configs:
  - role: pod
  relabel_configs:
  - action: keep
    regex: true
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_scrape_slow
  - action: replace
    regex: (https?)
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_scheme
    target_label: __scheme__
  - action: replace
    regex: (.+)
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_path
    target_label: __metrics_path__
  - action: replace
    regex: (\d+);(([A-Fa-f0-9]{1,4}::?){1,7}[A-Fa-f0-9]{1,4})
    replacement: '[$2]:$1'
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_port
    - __meta_kubernetes_pod_ip
    target_label: __address__
  - action: replace
    regex: (\d+);((([0-9]+?)(\.|$)){4})
    replacement: $2:$1
    source_labels:
    - __meta_kubernetes_pod_annotation_prometheus_io_port
    - __meta_kubernetes_pod_ip
    target_label: __address__
  - action: labelmap
    regex: __meta_kubernetes_pod_annotation_prometheus_io_param_(.+)
    replacement: __param_$1
  - action: labelmap
    regex: __meta_kubernetes_pod_label_(.+)
  - action: replace
    source_labels:
    - __meta_kubernetes_namespace
    target_label: namespace
  - action: replace
    source_labels:
    - __meta_kubernetes_pod_name
    target_label: pod
  - action: drop
    regex: Pending|Succeeded|Failed|Completed
    source_labels:
    - __meta_kubernetes_pod_phase
  scrape_interval: 5m
  scrape_timeout: 30s
```

The configuration above is the one that ships with Istio's Prometheus. It is adapted as follows.

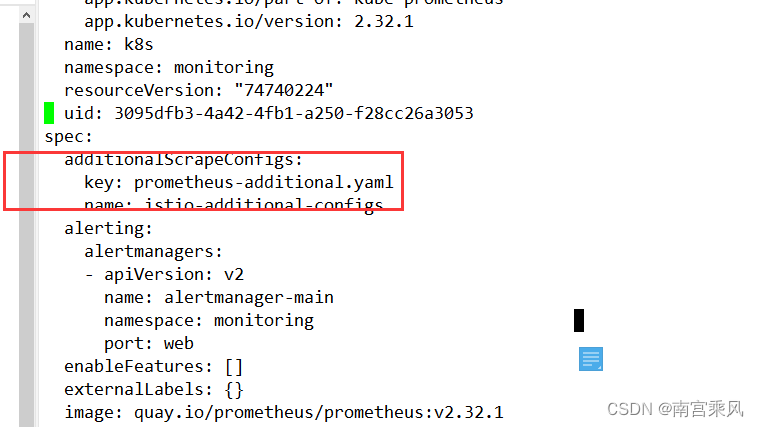

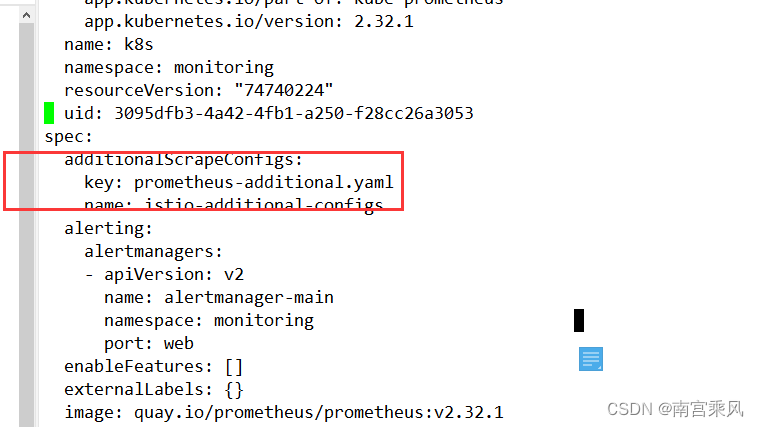

The Prometheus resource defined in prometheus-prometheus.yaml has a built-in field, additionalScrapeConfigs, for adding custom scrape targets:

https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#PrometheusSpec

So all that is needed is to write the configuration the way the official documentation requires. Create a file for the extra scrape job, prometheus-additional.yaml:

```yaml
- job_name: kubernetes-istio-pods
  honor_labels: true
  honor_timestamps: true
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  follow_redirects: true
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
    separator: ;
    regex: "true"
    replacement: $1
    action: keep
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape_slow]
    separator: ;
    regex: "true"
    replacement: $1
    action: drop
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]
    separator: ;
    regex: (https?)
    target_label: __scheme__
    replacement: $1
    action: replace
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
    separator: ;
    regex: (.+)
    target_label: __metrics_path__
    replacement: $1
    action: replace
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_port, __meta_kubernetes_pod_ip]
    separator: ;
    regex: (\d+);(([A-Fa-f0-9]{1,4}::?){1,7}[A-Fa-f0-9]{1,4})
    target_label: __address__
    replacement: '[$2]:$1'
    action: replace
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_port, __meta_kubernetes_pod_ip]
    separator: ;
    regex: (\d+);((([0-9]+?)(\.|$)){4})
    target_label: __address__
    replacement: $2:$1
    action: replace
  - separator: ;
    regex: __meta_kubernetes_pod_annotation_prometheus_io_param_(.+)
    replacement: __param_$1
    action: labelmap
  - separator: ;
    regex: __meta_kubernetes_pod_label_(.+)
    replacement: $1
    action: labelmap
  - source_labels: [__meta_kubernetes_namespace]
    separator: ;
    regex: (.*)
    target_label: namespace
    replacement: $1
    action: replace
  - source_labels: [__meta_kubernetes_pod_name]
    separator: ;
    regex: (.*)
    target_label: pod
    replacement: $1
    action: replace
  - source_labels: [__meta_kubernetes_pod_phase]
    separator: ;
    regex: Pending|Succeeded|Failed|Completed
    replacement: $1
    action: drop
  kubernetes_sd_configs:
  - role: pod
    kubeconfig_file: ""
    follow_redirects: true
```
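The job above only keeps pods that carry the prometheus.io/scrape annotation and builds the scrape address and path from the other prometheus.io annotations. As a rough illustration, a pod picked up by this job would carry metadata along these lines. The port and path values are assumptions based on what Istio's sidecar injector typically sets when metrics merging is enabled, so check them against your own pods:

```yaml
# Illustrative pod metadata; the values are assumptions, not taken from this post.
metadata:
  annotations:
    prometheus.io/scrape: "true"           # matched by the first keep rule
    prometheus.io/port: "15020"            # joined with the pod IP into __address__
    prometheus.io/path: /stats/prometheus  # copied into __metrics_path__
```

A pod without the scrape annotation, or one in the Pending, Succeeded, Failed, or Completed phase, is dropped by the relabel rules and never scraped.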

With the scrape job written, create a Secret named istio-additional-configs from prometheus-additional.yaml:

```shell
kubectl create secret generic istio-additional-configs --from-file=./prometheus-additional.yaml -n monitoring
```
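If you would rather keep the Secret in version control than create it imperatively, a declarative equivalent could look like the sketch below. The job body is shortened to a single keep rule purely for illustration; in practice the value would be the full content of prometheus-additional.yaml:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: istio-additional-configs
  namespace: monitoring
stringData:
  # The key must match the key referenced by additionalScrapeConfigs.
  prometheus-additional.yaml: |
    - job_name: kubernetes-istio-pods
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        regex: "true"
        action: keep
```

Using `stringData` lets you write the configuration as plain text; Kubernetes base64-encodes it into `data` when the Secret is stored.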

Then edit the Prometheus resource so that it references the Secret:

```
kubectl edit prometheus -n monitoring

spec:
  additionalScrapeConfigs:
    key: prometheus-additional.yaml
    name: istio-additional-configs
```

Alternatively, add additionalScrapeConfigs to prometheus-prometheus.yaml so that it references the Secret you created:

```yaml
  serviceAccountName: prometheus-k8s
  serviceMonitorNamespaceSelector: {}
  serviceMonitorSelector: {}
  version: v2.11.0
  additionalScrapeConfigs:
    name: istio-additional-configs
    key: prometheus-additional.yaml
```

Recreate the Prometheus resource so that the new configuration takes effect:

```shell
$ kubectl delete -f ./prometheus-prometheus.yaml && kubectl apply -f ./prometheus-prometheus.yaml
```

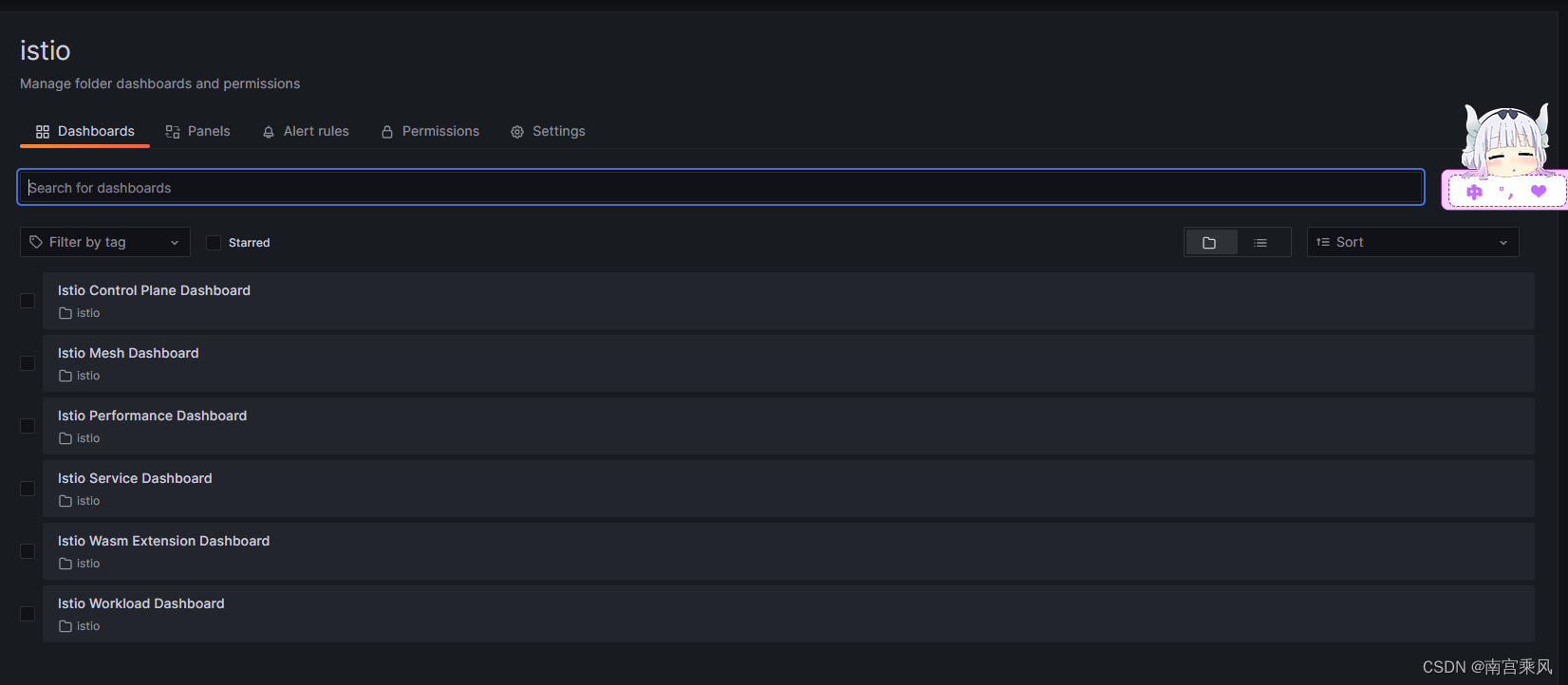

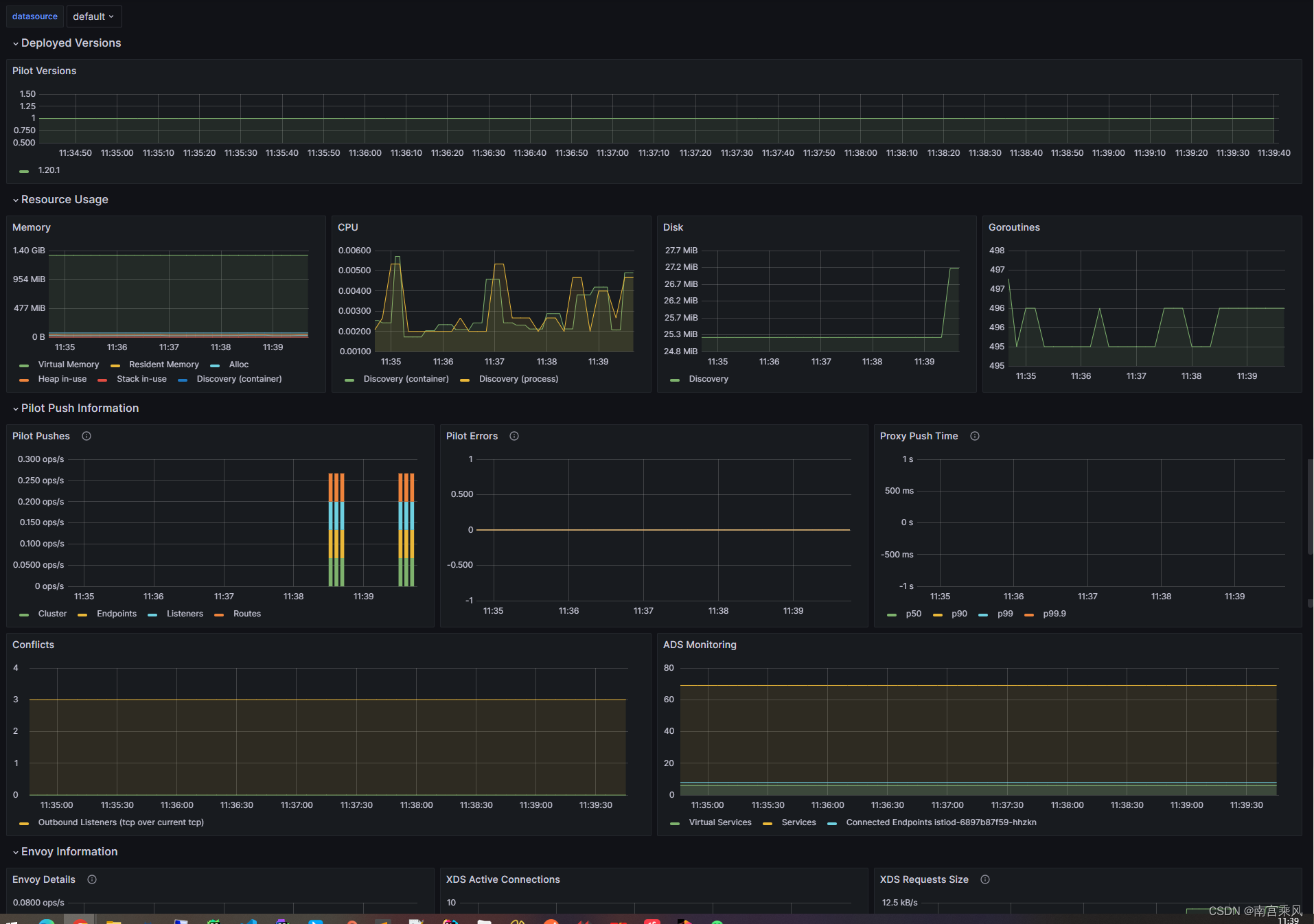

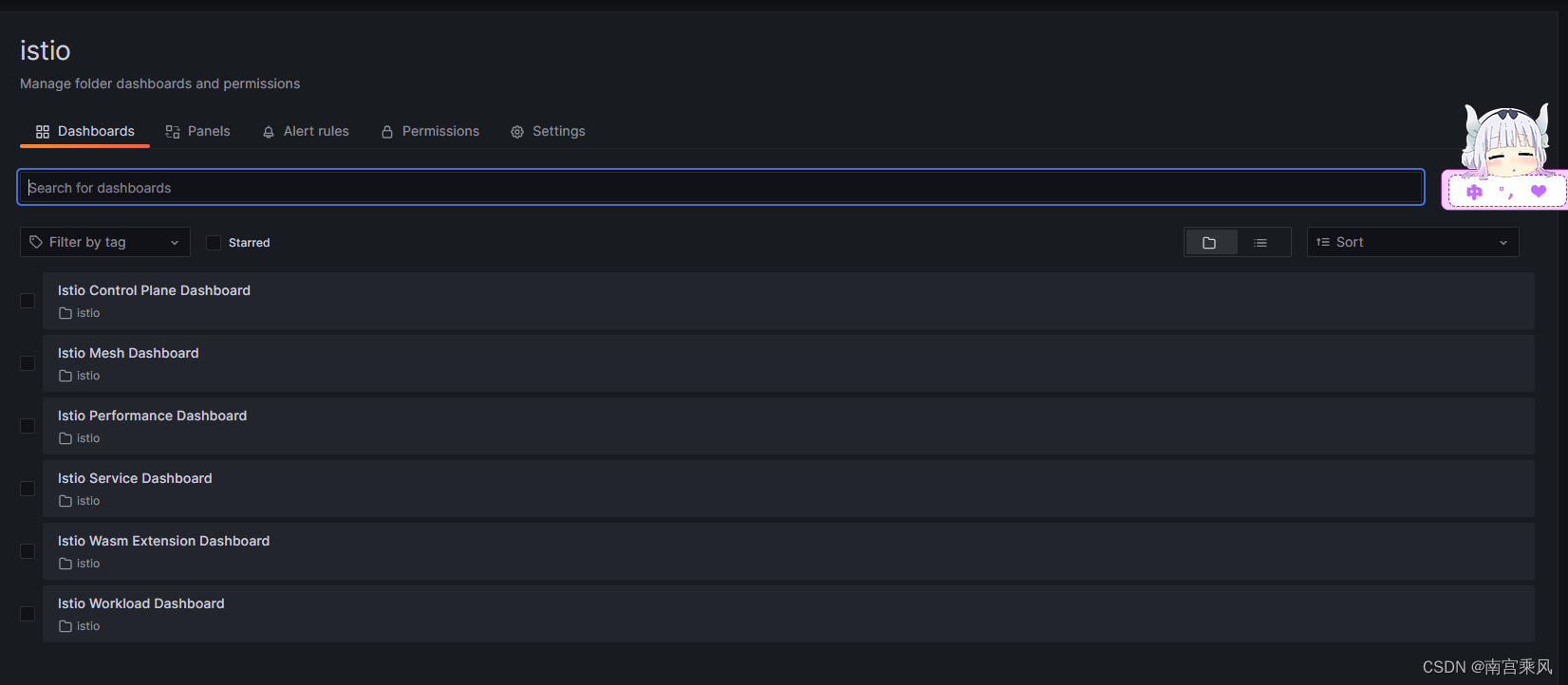

Grafana monitoring

Ready-made Grafana dashboards can be found on the official site:

https://grafana.com/grafana/dashboards/

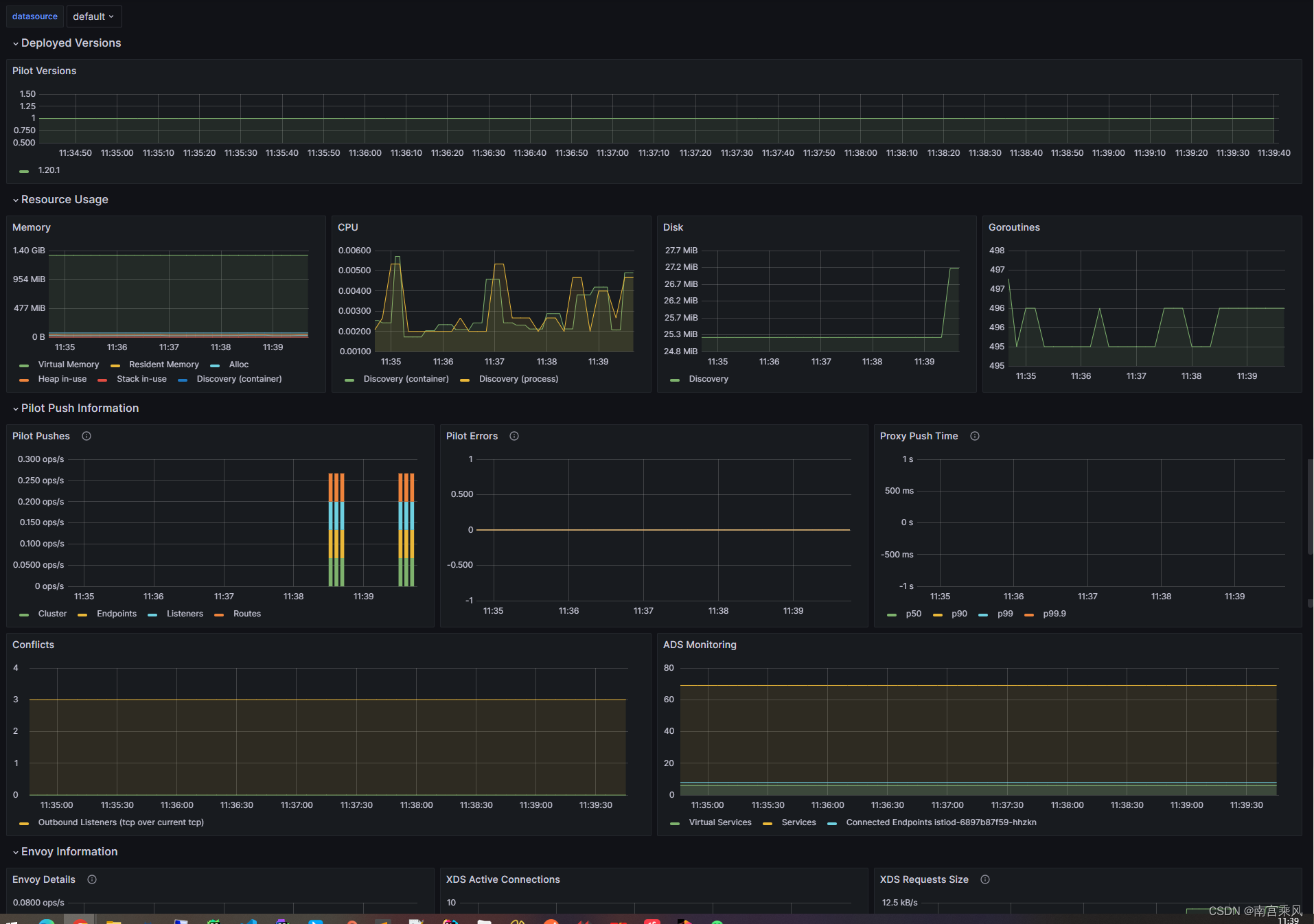

Istio also provides monitoring dashboards for its own platform.

As you can see, Istio's default dashboards are very comprehensive and cover everything that ought to be monitored.

Reference: https://www.cnblogs.com/justmine/p/12269587.html
